The Main Topics for Coursework or a Thesis Statement in Artificial Intelligence

Artificial Intelligence (AI) is changing the world, from machine learning and the Internet of Things to robotics and natural language processing.

Research is needed to understand more about AI and how it will affect the future.

AI-powered machines are likely to replace humans in many fields and the consequences of this are still largely unknown.

There are many topics of vital importance to choose from if you’re a student trying to decide on a topic involving AI for your thesis.


Machine learning (ML) as a Thesis Topic

Machine learning enables machines to learn a task automatically from experience and to improve their performance without being explicitly programmed for that task.

Machines need high-quality data to start with. They are trained by building machine learning models using the data and different algorithms.

The algorithms depend on the type of data and the tasks that need automation.
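As a minimal sketch of what training a model on data can look like, the example below fits a straight line to a few points with ordinary least squares. The data are made up for illustration, and the names `fit_line` and `predict` are hypothetical helpers, not part of any particular library.

```python
def fit_line(xs, ys):
    """Fit y = slope * x + intercept by ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    slope = cov_xy / var_x
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(model, x):
    """Apply a fitted (slope, intercept) pair to a new input."""
    slope, intercept = model
    return slope * x + intercept


# Made-up training data: hours of daily activity vs. a hypothetical wellness score.
hours = [1.0, 2.0, 3.0, 4.0]
score = [52.0, 54.0, 56.0, 58.0]

model = fit_line(hours, score)   # "training": estimate parameters from data
print(predict(model, 5.0))       # "inference": apply the model to unseen input
```

A different task or type of data would call for a different algorithm, such as a classifier for categorical labels, but the train-then-predict pattern stays the same.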

A topic for your research could involve discussing wearable devices. They are powered by machine learning and are becoming increasingly popular.

You could discuss their relevance in fields like health and insurance as well as how they can help individuals to improve their daily routines and move towards a more healthy lifestyle.

Deep learning (DL) as a Thesis Topic

Deep Learning is a subset of ML where learning imitates the inner workings of the human brain. It uses artificial neural networks to process data and make decisions.

The web-like networks can model non-linear relationships in data, which is an advantage over traditional algorithms that can only capture linear ones.

Google’s RankBrain is an example of an artificial neural network.

Deep learning is driving many AI applications such as object recognition, playing computer games, controlling self-driving cars and language translation.

A research topic could involve discussing deep learning and its various applications.

Reinforcement learning (RL) as a Thesis Topic

Reinforcement learning is the closest form of learning to the way human beings learn. For instance, students learn from their mistakes and a process of trial-and-error.

There are many different ways to use AI in education to help students, such as using AI-powered tutors, customized learning and smart content.

RL works on the same trial-and-error principle. Google’s AlphaGo program, which was trained in part with RL, beat Ke Jie, then the world’s top-ranked Go player, in 2017.

Students who don’t yet have the skills to handle complex assignments can make use of various tools, writing apps and professional writers.

If you need help with your student papers while conducting research for a university, EduBirdie offers a free plagiarism checker and citation tools, as well as professional writers who can take the pressure off you.

At U.K. EduBirdie, a professional thesis writer will finish your paper for you. It also offers editing and proofreading services at very reasonable prices.


Natural language processing (NLP) as a Thesis Topic

This area of AI relates to how machines can learn to recognize and analyze human speech. Speech recognition, natural language translation and natural language generation are some of the areas of NLP.

With the help of NLP, systems can even read sentiment and predict which parts of the language are important. Tools like IBM Watson and Google Translate, along with speech recognition and sentiment analysis applications, show the importance of NLP in the daily lives of individuals.

NLP helps build intelligent systems such as customer support chatbots. AI in education is another good example.

Chatbots use NLP and machine learning to interact with customers and solve their queries. Your research topic could relate to chatbots and their interaction with humans.

Computer vision (CV) as a Thesis Topic

Millions of images are uploaded daily on the internet. Computers are very good at certain tasks but they can struggle with simple tasks like being able to recognize and identify objects.

Computer vision is a field of AI that enables systems to analyze and understand images. CV systems can now even outperform humans in some tasks, such as classifying visual objects.

One of the applications of computer vision is in autonomous vehicles that need to analyze images of surroundings in order to navigate.

A study topic could involve discussing computer vision and how using it allows smart systems to be built. Applications of computer vision could then be presented.

Recommender systems (RS) as a Thesis Topic

Recommender systems use algorithms to offer relevant suggestions to users. These may be suggestions on a TV show, a product, a service or even who to date.

You will receive many recommendations after you search for a particular product or browse a list of favorite movies. RS can base suggestions on your past behavior and past preferences, trends and the preferences of your peers.

A very relevant topic would be to explore the use of recommender systems in the field of e-commerce. Industry giants like Amazon are currently using recommender systems to help customers find the right products or services.

You could discuss their implementation and the type of results they bring to e-commerce businesses.

Robotics as a Thesis Topic

Robots can behave and perform the same actions as human beings, thanks to AI. They can act intelligently and even solve problems and learn in controlled environments.

For example, Kismet is a social interaction robot developed by MIT’s AI lab that can recognize human language and interact with humans.

Robots and AI are changing the way businesses work. Some people argue that this will have an adverse effect on humans as they are replaced by AI-powered machines.

A research topic could aim to understand to what extent businesses will be impacted by AI-powered machines and assess their future in different businesses.

There is an increase in the number of research papers being published in different areas of AI. If you’re a student wanting to come up with a topic involving artificial intelligence for your thesis, there are many vitally important sub-topics to choose from.

Each of these sub-topics provides plenty of opportunities for meaningful research into AI and new ideas on its application in the future as machines keep growing in intelligence.

About The Author

Paul Calderon

Paul Calderon is a data security specialist working with a tech startup based in Silicon Valley. After work hours, he helps students studying for their computer science degrees or programming courses with essays, dissertations and term papers. When he isn’t working, he likes playing tennis, cycling, and creating vlogs on local travel.


What are effective thesis statements for an artificial intelligence research paper?

Effective thesis statements for an artificial intelligence research paper should be clear, specific, arguable, researchable, and relevant to the field of artificial intelligence. Here are some examples:

"The ethical implications of artificial intelligence in autonomous vehicles: balancing safety, privacy, and decision-making algorithms" [2]

- This thesis statement focuses on the ethical considerations surrounding the use of AI in autonomous vehicles, specifically addressing safety, privacy, and decision-making algorithms.

"Exploring the impact of artificial intelligence on job displacement and the future of work: a comparative analysis of industries" [1]

- This thesis statement examines the effects of AI on job displacement and the future of work, comparing its impact across different industries.

"Enhancing healthcare delivery through the integration of artificial intelligence: a case study of AI-powered diagnosis systems" [1]

- This thesis statement investigates how the integration of AI in healthcare can improve the delivery of medical services, with a specific focus on AI-powered diagnosis systems.

"Understanding the role of artificial intelligence in cybersecurity: analyzing the effectiveness of AI-based threat detection and prevention" [2]

- This thesis statement explores the role of AI in cybersecurity, specifically examining the effectiveness of AI-based threat detection and prevention methods.

"The implications of bias in artificial intelligence algorithms: addressing fairness and accountability in decision-making processes" [2]

- This thesis statement delves into the issue of bias in AI algorithms, highlighting the importance of fairness and accountability in decision-making processes.

Learn more:

- Artificial Intelligence & Machine Learning Thesis Statement Examples | AcademicHelp.net

- Thesis Statement Examples - Learn The Art From The Experts.

- How to Write a Better Thesis Statement Using AI (2023 Updated)


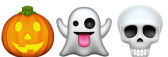

12 Best Artificial Intelligence Topics for Research in 2024

Explore the "12 Best Artificial Intelligence Topics for Research in 2024." Dive into the top AI research areas, including Natural Language Processing, Computer Vision, Reinforcement Learning, Explainable AI (XAI), AI in Healthcare, Autonomous Vehicles, and AI Ethics and Bias. Stay ahead of the curve and make informed choices for your AI research endeavours.


Table of Contents

1) Top Artificial Intelligence Topics for Research

a) Natural Language Processing

b) Computer vision

c) Reinforcement Learning

d) Explainable AI (XAI)

e) Generative Adversarial Networks (GANs)

f) Robotics and AI

g) AI in healthcare

h) AI for social good

i) Autonomous vehicles

j) AI ethics and bias

2) Conclusion

Top Artificial Intelligence Topics for Research

This section of the blog will expand on some of the best Artificial Intelligence Topics for research.

Natural Language Processing

Natural Language Processing (NLP) is centred around empowering machines to comprehend, interpret, and even generate human language. Within this domain, three distinctive research avenues beckon:

1) Sentiment analysis: This entails the study of methodologies to decipher and discern emotions encapsulated within textual content. Understanding sentiments is pivotal in applications ranging from brand perception analysis to social media insights.

2) Language generation: Generating coherent and contextually apt text is an ongoing pursuit. Investigating mechanisms that allow machines to produce human-like narratives and responses holds immense potential across sectors.

3) Question answering systems: Constructing systems that can grasp the nuances of natural language questions and provide accurate, coherent responses is a cornerstone of NLP research. This facet has implications for knowledge dissemination, customer support, and more.
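To make the sentiment analysis avenue concrete, here is a deliberately simple sketch that scores text against two small word lists. The word lists and the `sentiment` function are illustrative assumptions. Research systems typically learn these associations from labelled data rather than relying on a fixed lexicon.

```python
# Toy lexicons, chosen for illustration only.
POSITIVE = {"good", "great", "love", "excellent", "helpful"}
NEGATIVE = {"bad", "poor", "hate", "terrible", "slow"}


def sentiment(text):
    """Label text by counting positive and negative words."""
    words = [w.strip(".,!?").lower() for w in text.split()]
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


print(sentiment("I love this phone, the camera is great!"))
print(sentiment("The battery is terrible and support was slow."))
print(sentiment("It arrived on Tuesday."))
```

A lexicon approach like this misses negation ("not good") and sarcasm, which is part of why deciphering sentiment remains a research topic.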

Computer Vision

Computer Vision, a discipline that bestows machines with the ability to interpret visual data, is replete with intriguing avenues for research:

1) Object detection and tracking: The development of algorithms capable of identifying and tracking objects within images and videos finds relevance in surveillance, automotive safety, and beyond.

2) Image captioning: Bridging the gap between visual and textual comprehension, this research area focuses on generating descriptive captions for images, catering to visually impaired individuals and enhancing multimedia indexing.

3) Facial recognition: Advancements in facial recognition technology hold implications for security, personalisation, and accessibility, necessitating ongoing research into accuracy and ethical considerations.

Reinforcement Learning

Reinforcement Learning revolves around training agents to make sequential decisions in order to maximise rewards. Within this realm, three prominent Artificial Intelligence Topics emerge:

1) Autonomous agents: Crafting AI agents that exhibit decision-making prowess in dynamic environments paves the way for applications like autonomous robotics and adaptive systems.

2) Deep Q-Networks (DQN): Deep Q-Networks, a class of reinforcement learning algorithms, remain under active research for refining value-based decision-making in complex scenarios.

3) Policy gradient methods: These methods, aiming to optimise policies directly, play a crucial role in fine-tuning decision-making processes across domains like gaming, finance, and robotics.

Explainable AI (XAI)

The pursuit of Explainable AI seeks to demystify the decision-making processes of AI systems. This area comprises Artificial Intelligence Topics such as:

1) Model interpretability: Unravelling the inner workings of complex models to elucidate the factors influencing their outputs, thus fostering transparency and accountability.

2) Visualising neural networks: Transforming abstract neural network structures into visual representations aids in comprehending their functionality and behaviour.

3) Rule-based systems: Augmenting AI decision-making with interpretable, rule-based systems holds promise in domains requiring logical explanations for actions taken.

Generative Adversarial Networks (GANs)

The captivating world of Generative Adversarial Networks (GANs) unfolds through the interplay of generator and discriminator networks, birthing remarkable research avenues:

1) Image generation: Crafting realistic images from random noise showcases the creative potential of GANs, with applications spanning art, design, and data augmentation.

2) Style transfer: Enabling the transfer of artistic styles between images, merging creativity and technology to yield visually captivating results.

3) Anomaly detection: GANs find utility in identifying anomalies within datasets, bolstering fraud detection, quality control, and anomaly-sensitive industries.

Robotics and AI

The synergy between Robotics and AI is a fertile ground for exploration, with Artificial Intelligence Topics such as:

1) Human-robot collaboration: Research in this arena strives to establish harmonious collaboration between humans and robots, augmenting industry productivity and efficiency.

2) Robot learning: By enabling robots to learn and adapt from their experiences, Researchers foster robots' autonomy and the ability to handle diverse tasks.

3) Ethical considerations: Delving into the ethical implications surrounding AI-powered robots helps establish responsible guidelines for their deployment.

AI in healthcare

AI presents a transformative potential within healthcare, spurring research into:

1) Medical diagnosis: AI aids in accurately diagnosing medical conditions, revolutionising early detection and patient care.

2) Drug discovery: Leveraging AI for drug discovery expedites the identification of potential candidates, accelerating the development of new treatments.

3) Personalised treatment: Tailoring medical interventions to individual patient profiles enhances treatment outcomes and patient well-being.

AI for social good

Harnessing the prowess of AI for Social Good entails addressing pressing global challenges:

1) Environmental monitoring: AI-powered solutions facilitate real-time monitoring of ecological changes, supporting conservation and sustainable practices.

2) Disaster response: Research in this area bolsters disaster response efforts by employing AI to analyse data and optimise resource allocation.

3) Poverty alleviation: Researchers contribute to humanitarian efforts and socioeconomic equality by devising AI solutions to tackle poverty.


Autonomous vehicles

Autonomous Vehicles represent a realm brimming with potential and complexities, necessitating research in Artificial Intelligence Topics such as:

1) Sensor fusion: Integrating data from diverse sensors enhances perception accuracy, which is essential for safe autonomous navigation.

2) Path planning: Developing advanced algorithms for path planning ensures optimal routes while adhering to safety protocols.

3) Safety and ethics: Ethical considerations, such as programming vehicles to make difficult decisions in potential accident scenarios, require meticulous research and deliberation.
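One simple way to illustrate the sensor fusion idea above is inverse-variance weighting, where each reading counts in proportion to how precise its sensor is. This is a sketch under the assumption that the sensors' errors are independent and unbiased. The readings and the `fuse` helper are hypothetical, and production systems generally use richer methods such as Kalman filters.

```python
def fuse(estimates):
    """Combine (value, variance) pairs by inverse-variance weighting."""
    weights = [1.0 / variance for _, variance in estimates]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, estimates)) / total
    fused_variance = 1.0 / total   # never larger than the best single sensor's
    return value, fused_variance


# Hypothetical distance-to-obstacle readings in metres: (value, variance).
lidar = (10.0, 0.25)   # more precise sensor
radar = (12.0, 1.0)    # less precise sensor

distance, variance = fuse([lidar, radar])
print(distance, variance)
```

The fused estimate sits closer to the more precise sensor, and its variance is lower than that of either input, which is the sense in which fusion enhances perception accuracy.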

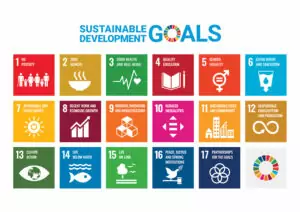

AI ethics and bias

Ethical underpinnings in AI drive research efforts in these directions:

1) Fairness in AI: Ensuring AI systems remain impartial and unbiased across diverse demographic groups.

2) Bias detection and mitigation: Identifying and rectifying biases present within AI models supports more equitable outcomes.

3) Ethical decision-making: Developing frameworks that imbue AI with ethical decision-making capabilities aligns technology with societal values.
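As one possible starting point for bias detection, the sketch below computes a demographic parity gap: the difference in positive-decision rates between groups. The decisions and group names are made up, and demographic parity is only one of several fairness criteria a study might adopt.

```python
def positive_rate(decisions):
    """Share of decisions that are positive (1) rather than negative (0)."""
    return sum(decisions) / len(decisions)


def demographic_parity_gap(decisions_by_group):
    """Return the largest difference in positive rates, plus the per-group rates."""
    rates = {group: positive_rate(d) for group, d in decisions_by_group.items()}
    return max(rates.values()) - min(rates.values()), rates


# Made-up model outputs: 1 = approved, 0 = rejected.
decisions = {
    "group_a": [1, 1, 1, 0],
    "group_b": [1, 0, 0, 0],
}

gap, rates = demographic_parity_gap(decisions)
print(rates, gap)
```

A gap of zero would mean every group receives positive decisions at the same rate. A large gap flags the model for closer inspection but does not by itself explain the cause.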

Future of AI

The vanguard of AI beckons Researchers to explore these horizons:

1) Artificial General Intelligence (AGI): Speculating on the potential emergence of AI systems capable of emulating human-like intelligence opens dialogues on the implications and challenges.

2) AI and creativity: Probing the interface between AI and creative domains, such as art and music, unveils the coalescence of human ingenuity and technological prowess.

3) Ethical and regulatory challenges: Researching the ethical dilemmas and regulatory frameworks underpinning AI's evolution fortifies responsible innovation.

AI and education

The intersection of AI and Education opens doors to innovative learning paradigms:

1) Personalised learning: Developing AI systems that adapt educational content to individual learning styles and paces.

2) Intelligent tutoring systems: Creating AI-driven tutoring systems that provide targeted support to students.

3) Educational data mining: Applying AI to analyse educational data for insights into learning patterns and trends.


Conclusion

The domain of AI is ever-expanding, rich with intriguing topics about Artificial Intelligence that beckon Researchers to explore, question, and innovate. Through the pursuit of these twelve diverse Artificial Intelligence Topics, we pave the way for not only technological advancement but also a deeper understanding of the societal impact of AI. By delving into these realms, Researchers stand poised to shape the trajectory of AI, ensuring it remains a force for progress, empowerment, and positive transformation in our world.


8 Best Topics for Research and Thesis in Artificial Intelligence


Imagine a future in which intelligence is not restricted to humans! A future where machines can think as well as humans and work with them to create an even more exciting universe. While this future is still far away, Artificial Intelligence has already made a lot of progress. There is a lot of research being conducted in almost all fields related to AI, such as Quantum Computing, Healthcare, Autonomous Vehicles, Internet of Things, Robotics, etc. So much so that the number of research papers published annually on Artificial Intelligence has increased by 90% since 1996. Keeping this in mind, if you want to research and write a thesis based on Artificial Intelligence, there are many sub-topics that you can focus on. Some of these topics, along with a brief introduction, are provided in this article. We have also mentioned some published research papers related to each of these topics so that you can better understand the research process.

So without further ado, let’s see the different Topics for Research and Thesis in Artificial Intelligence!

1. Machine Learning

Machine Learning involves the use of Artificial Intelligence to enable machines to learn a task from experience without programming them specifically for that task. (In short, machines learn automatically without human hand-holding!) This process starts with feeding them good-quality data and then training the machines by building various machine learning models using the data and different algorithms. The choice of algorithm depends on what type of data we have and what kind of task we are trying to automate. Generally speaking, Machine Learning algorithms are divided into three types: Supervised Machine Learning Algorithms, Unsupervised Machine Learning Algorithms, and Reinforcement Machine Learning Algorithms.

2. Deep Learning

Deep Learning is a subset of Machine Learning that learns by imitating the inner workings of the human brain in order to process data and implement decisions based on that data. Basically, Deep Learning uses artificial neural networks to implement machine learning. These neural networks are connected in a web-like structure like the networks in the human brain (basically a simplified version of our brain!). This web-like structure, combined with non-linear activation functions, means that artificial neural networks can model non-linear relationships in data, which is a significant advantage over traditional algorithms that can only capture linear ones. An example of a deep neural network is RankBrain, which is one of the factors in the Google Search algorithm.
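To see why the layered, non-linear structure matters, consider XOR, a function that no single linear threshold unit can compute. The sketch below is a tiny two-layer network that does compute it. The weights are picked by hand for illustration rather than learned, and the function names are hypothetical.

```python
def step(x):
    """Threshold activation: the non-linear part of each unit."""
    return 1 if x > 0 else 0


def xor_network(a, b):
    """Two hidden units feeding one output unit."""
    # Hidden layer: one unit fires for "a OR b", the other for "a AND b".
    h_or = step(a + b - 0.5)
    h_and = step(a + b - 1.5)
    # Output unit: fires for OR but not AND, which is XOR.
    return step(h_or - h_and - 0.5)


for a in (0, 1):
    for b in (0, 1):
        print(a, b, xor_network(a, b))
```

In a real deep learning system the weights would be found by training on data, and the networks have many more units and layers, but the principle of stacking non-linear units is the same.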

3. Reinforcement Learning

Reinforcement Learning is a part of Artificial Intelligence in which the machine learns something in a way that is similar to how humans learn. As an example, assume that the machine is a student. Here the hypothetical student learns from its own mistakes over time (like we had to!). So Reinforcement Machine Learning Algorithms learn optimal actions through trial and error. This means that the algorithm decides the next action by learning behaviors that are based on its current state and that will maximize the reward in the future. And like humans, this works for machines as well! For example, Google’s AlphaGo computer program, trained in part with Reinforcement Learning, beat Ke Jie, then the world’s top-ranked Go player (that’s a human!), in 2017.
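The trial-and-error idea can be sketched with tabular Q-learning on a toy problem. Everything here is an illustrative assumption: a five-cell corridor, a reward of 1 for reaching the last cell, and hand-picked values for the learning rate and discount factor. The agent explores by taking random actions and gradually learns which action is worth more in each state.

```python
import random

N_STATES = 5            # corridor cells 0..4; reaching cell 4 ends the episode
GOAL = N_STATES - 1
ACTIONS = (-1, +1)      # index 0 = move left, index 1 = move right
ALPHA, GAMMA = 0.5, 0.9  # learning rate and discount factor (assumed values)


def step(state, action):
    """Move within the corridor; reward 1 only on reaching the goal."""
    next_state = min(max(state + action, 0), GOAL)
    reward = 1.0 if next_state == GOAL else 0.0
    return next_state, reward


def train(episodes=500, seed=0):
    """Learn a table of action values from randomly explored episodes."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        while state != GOAL:
            a = rng.randrange(2)  # trial: try a random action
            next_state, reward = step(state, ACTIONS[a])
            # Error: gap between the current estimate and the observed target.
            target = reward + GAMMA * max(q[next_state])
            q[state][a] += ALPHA * (target - q[state][a])
            state = next_state
    return q


q_table = train()
policy = ["left" if row[0] > row[1] else "right" for row in q_table[:GOAL]]
print(policy)
```

The learned values favor the action that leads toward future reward in every state, even though the only reward ever observed is at the end of the corridor.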

4. Robotics

Robotics is a field that deals with creating machines, some of them humanoid, that can perform actions like human beings. Now, robots can act like humans in certain situations, but can they think like humans as well? This is where artificial intelligence comes in! AI allows robots to act intelligently in certain situations. These robots may be able to solve problems in a limited sphere or even learn in controlled environments. An example of this is Kismet, which is a social interaction robot developed at MIT’s Artificial Intelligence Lab. It recognizes human body language and voice and interacts with humans accordingly. Another example is Robonaut, which was developed by NASA to work alongside astronauts in space.

5. Natural Language Processing

It’s obvious that humans can converse with each other using speech but now machines can too! This is known as Natural Language Processing where machines analyze and understand language and speech as it is spoken (Now if you talk to a machine it may just talk back!). There are many subparts of NLP that deal with language such as speech recognition, natural language generation, natural language translation, etc. NLP is currently extremely popular for customer support applications, particularly the chatbot. These chatbots use ML and NLP to interact with the users in textual form and solve their queries. So you get the human touch in your customer support interactions without ever directly interacting with a human.

Some Research Papers published in the field of Natural Language Processing are provided here. You can study them to get more ideas about research and thesis on this topic.

6. Computer Vision

The internet is full of images! This is the selfie age, where taking an image and sharing it has never been easier. In fact, millions of images are uploaded and viewed every day on the internet. To make the most use of this huge amount of images online, it’s important that computers can see and understand images. And while humans can do this easily without a thought, it’s not so easy for computers! This is where Computer Vision comes in. Computer Vision uses Artificial Intelligence to extract information from images. This information can be object detection in the image, identification of image content to group various images together, etc. An application of computer vision is navigation for autonomous vehicles by analyzing images of surroundings such as AutoNav used in the Spirit and Opportunity rovers which landed on Mars.
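As a small illustration of extracting information from an image, the sketch below finds a vertical edge in a tiny grayscale grid by comparing each pixel with its right-hand neighbour. The image values and the `horizontal_gradient` helper are made up for this example. Practical systems use learned filters and far larger images.

```python
def horizontal_gradient(image):
    """Absolute brightness difference between each pixel and its right neighbour."""
    return [
        [abs(row[x + 1] - row[x]) for x in range(len(row) - 1)]
        for row in image
    ]


# A made-up 3x4 grayscale image: dark on the left, bright on the right.
image = [
    [0, 0, 9, 9],
    [0, 0, 9, 9],
    [0, 0, 9, 9],
]

edges = horizontal_gradient(image)
for row in edges:
    print(row)
```

Large values in the result mark where brightness changes sharply, which is the kind of low-level cue that object detection builds on.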

7. Recommender Systems

When you are using Netflix, do you get a recommendation of movies and series based on your past choices or genres you like? This is done by Recommender Systems that provide you some guidance on what to choose next among the vast choices available online. A Recommender System can be based on Content-Based Recommendation or Collaborative Filtering. Content-Based Recommendation is done by analyzing the content of the items. For example, you can be recommended books you might like based on Natural Language Processing done on the books. Collaborative Filtering, on the other hand, is done by analyzing your past reading behavior alongside that of other readers, and then recommending books that readers with similar tastes liked.
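Collaborative filtering can be sketched in a few lines. The example below is a user-based variant that scores unread books by the ratings of other readers, weighted by how similar their tastes are (cosine similarity over shared books). The readers, books and ratings are invented for illustration.

```python
import math

# Made-up ratings on a 1-5 scale.
ratings = {
    "ana":  {"Dune": 5, "Emma": 1, "Neuromancer": 4},
    "ben":  {"Dune": 4, "Emma": 2, "Neuromancer": 5, "Foundation": 5},
    "cara": {"Dune": 1, "Emma": 5, "Persuasion": 5},
}


def cosine(u, v):
    """Cosine similarity of two users over the items both have rated."""
    shared = set(u) & set(v)
    if not shared:
        return 0.0
    dot = sum(u[i] * v[i] for i in shared)
    norm_u = math.sqrt(sum(u[i] ** 2 for i in shared))
    norm_v = math.sqrt(sum(v[i] ** 2 for i in shared))
    return dot / (norm_u * norm_v)


def recommend(user):
    """Rank items the user has not rated by similarity-weighted ratings."""
    scores = {}
    for other, other_ratings in ratings.items():
        if other == user:
            continue
        sim = cosine(ratings[user], other_ratings)
        for item, rating in other_ratings.items():
            if item not in ratings[user]:
                scores[item] = scores.get(item, 0.0) + sim * rating
    return sorted(scores, key=scores.get, reverse=True)


print(recommend("ana"))
```

Because ana's ratings resemble ben's far more than cara's, ben's favourite ranks first. A content-based recommender would instead compare features of the books themselves.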

8. Internet of Things

Artificial Intelligence deals with the creation of systems that can learn to emulate human tasks using their prior experience and without any manual intervention. Internet of Things, on the other hand, is a network of various devices that are connected over the internet and can collect and exchange data with each other. Now, all these IoT devices generate a lot of data that needs to be collected and mined for actionable results. This is where Artificial Intelligence comes into the picture. Internet of Things is used to collect and handle the huge amount of data that is required by the Artificial Intelligence algorithms. In turn, these algorithms convert the data into useful actionable results that can be implemented by the IoT devices.


How to Write a Better Thesis Statement Using AI (2023 Updated)


Meredith Sell

With the exceptions of poetry and fiction, every piece of writing needs a thesis statement.

- Opinion pieces for the local newspaper? Yes.

- An essay for a college class? You betcha.

- A book about China’s Ming Dynasty? Absolutely.

All of these pieces of writing need a thesis statement that sums up what they’re about and tells the reader what to expect, whether you’re making an argument, describing something in detail, or exploring ideas.

But how do you write a thesis statement? How do you even come up with one?

This step-by-step guide will show you exactly how — and help you make sure every thesis statement you write has all the parts needed to be clear, coherent, and complete.

Let’s start by making sure we understand what a thesis is (and what it’s not).

What Is a Thesis Statement?

A thesis statement is a one- or two-sentence statement that concisely describes your paper’s subject, angle or position, and offers a preview of the evidence or argument your essay will present.

A thesis is not:

- An exclamation

- A simple fact

Think of your thesis as the road map for your essay. It briefly charts where you’ll start (subject), what you’ll cover (evidence/argument), and where you’ll land (position, angle).

Writing a thesis early in your essay writing process can help you keep your writing focused, so you won’t get off-track describing something that has nothing to do with your central point. Your central point is your thesis, and the rest of your essay fleshes it out.


Different Kinds of Papers Need Different Kinds of Theses

How you compose your thesis will depend on the type of essay you’re writing. For academic writing, there are three main kinds of essays:

- Persuasive, aka argumentative

- Expository, aka explanatory

- Narrative

A persuasive essay requires a thesis that clearly states the central stance of the paper: what the rest of the paper will argue in support of.

Paper books are superior to ebooks when it comes to form, function, and overall reader experience.

An expository essay’s thesis sets up the paper’s focus and angle: the paper’s unique take, what in particular it will be describing and why. The why element gives the reader a reason to read; it tells the reader why the topic matters.

Understanding the functional design of physical books can help ebook designers create digital reading experiences that usher readers into literary worlds without technological difficulties.

A narrative essay’s thesis is similar to that of an expository essay, but it may focus less on tangible realities and more on intangibles such as the human experience.

The books I’ve read over the years have shaped me, opening me up to worlds and ideas and ways of being that I would otherwise know nothing about.

As you prepare to craft your thesis, think through the goal of your paper. Are you making an argument? Describing the chemical properties of hydrogen? Exploring your relationship with the outdoors? What do you want the reader to take away from reading your piece?

Make note of your paper’s goal and then walk through our thesis-writing process.

Now that you practically have a PhD in theses, let’s learn how to write one:

How to Write (and Develop) a Strong Thesis

If developing a thesis is stressing you out, take heart: basically no one has a strong thesis right away. Developing a thesis is a multi-step process that takes time, thought, and perhaps most important of all, research.

Tackle these steps one by one and you’ll soon have a thesis that’s rock-solid.

1. Identify your essay topic.

Are you writing about gardening? Sword etiquette? King Louis XIV?

With your assignment requirements in mind, pick out a topic (or two) and do some preliminary research. Read up on the basic facts of your topic. Identify a particular angle or focus that’s interesting to you. If you’re writing a persuasive essay, look for an aspect that people have contentious opinions on (and read our piece on persuasive essays to craft a compelling argument).

If your professor assigned a particular topic, you’ll still want to do some reading to make sure you know enough about the topic to pick your specific angle.

If you’re writing a narrative essay involving personal experiences, you may need to combine research and freewriting to explore the topic before homing in on what’s most compelling to you.

Once you have a clear idea of the topic and what interests you, go on to the next step.

2. Ask a research question.

You know what you’re going to write about, at least broadly. Now you just have to narrow in on an angle or focus appropriate to the length of your assignment. To do this, start by asking a question that probes deeper into your topic.

This question may explore connections between causes and effects, the accuracy of an assumption you have, or a value judgment you’d like to investigate, among others.

For example, if you want to write about gardening for a persuasive essay and you’re interested in raised garden beds, your question could be:

What are the unique benefits of gardening in raised beds versus on the ground? Is one better than the other?

Or if you’re writing about sword etiquette for an expository essay, you could ask:

How did sword etiquette in Europe compare to samurai sword etiquette in Japan?

How does medieval sword etiquette influence modern fencing?

Kickstart your curiosity and come up with a handful of intriguing questions. Then pick the two most compelling to initially research (you’ll discard one later).

3. Answer the question tentatively.

You probably have an initial thought of what the answer to your research question is. Write that down in as specific terms as possible. This is your working thesis.

Gardening in raised beds is preferable because you won’t accidentally awaken dormant weed seeds — and you can provide more fertile soil and protection from invasive species.

Medieval sword-fighting rituals are echoed in modern fencing etiquette.

Why is a working thesis helpful?

Both your research question and your working thesis will guide your research. It’s easy to start reading anything and everything related to your broad topic — but for a 4-, 10-, or even 20-page paper, you don’t need to know everything. You just need the relevant facts and enough context to accurately and clearly communicate to your reader.

Your working thesis will not be identical to your final thesis, because you don’t know that much just yet.

This brings us to our next step:

4. Research the question (and working thesis).

What do you need to find out in order to evaluate the strength of your thesis? What do you need to investigate to answer your research question more fully?

Comb through authoritative, trustworthy sources to find that information. And keep detailed notes.

As you research, evaluate the strengths and weaknesses of your thesis — and see what other opposing or more nuanced theses exist.

If you’re writing a persuasive essay, it may be helpful to organize information according to what does or does not support your thesis — or simply gather the information and see if it’s changing your mind. What new opinion do you have now that you’ve learned more about your topic and question? What discoveries have you made that discredit or support your initial thesis?

Raised garden beds prevent full maturity in certain plants — and are more prone to cold, heat, and drought.

If you’re writing an expository essay, use this research process to see if your initial idea holds up to the facts. And be on the lookout for other angles that would be more appropriate or interesting for your assignment.

Modern fencing doesn’t share many rituals with medieval swordplay.

With all this research under your belt, you can answer your research question in-depth — and you’ll have a clearer idea of whether or not your working thesis is anywhere near being accurate or arguable. What’s next?

5. Refine your thesis.

If you found that your working thesis was totally off-base, you’ll probably have to write a new one from scratch.

For a persuasive essay, maybe you found a different opinion far more compelling than your initial take. For an expository essay, maybe your initial assumption was completely wrong. Could you flip your thesis around and inform your readers of what you learned?

Use what you’ve learned to rewrite or revise your thesis to be more accurate, specific, and compelling.

Raised garden beds appeal to many gardeners for the semblance of control they offer over what will and will not grow, but they are also more prone to changes in weather and air temperature and may prevent certain plants from reaching full maturity. All of this makes raised beds the worse option for ambitious gardeners.

While swordplay can be traced back through millennia, modern fencing has little in common with medieval combat where swordsmen fought to the death.

If you’ve been researching two separate questions and theses, now’s the time to evaluate which one is most interesting, compelling, or appropriate for your assignment. Did one thesis completely fall apart when faced with the facts? Did one fail to turn up any legitimate sources or studies? Choose the stronger question or the more interesting (revised) thesis, and discard the other.

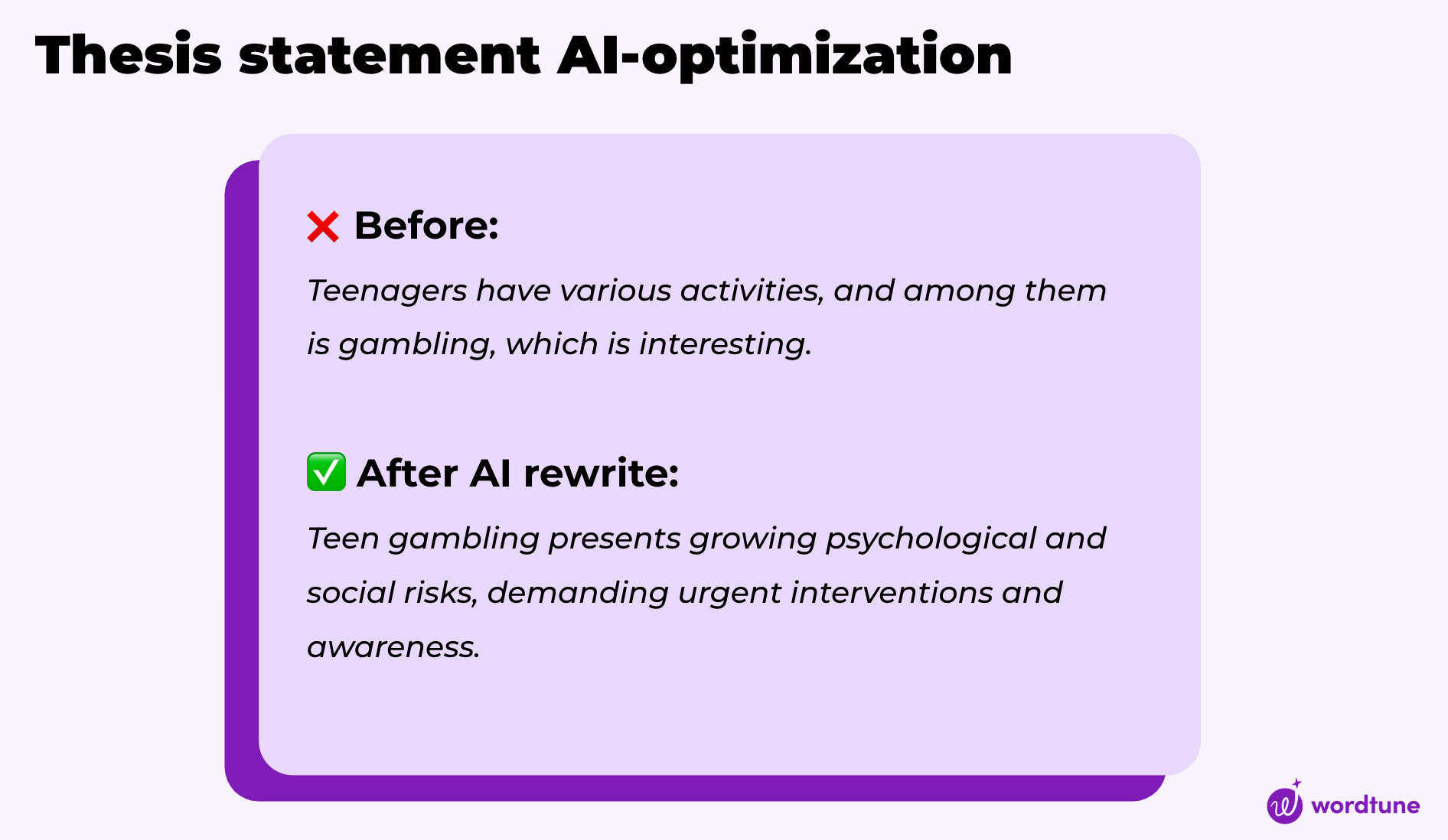

6. Get help from AI.

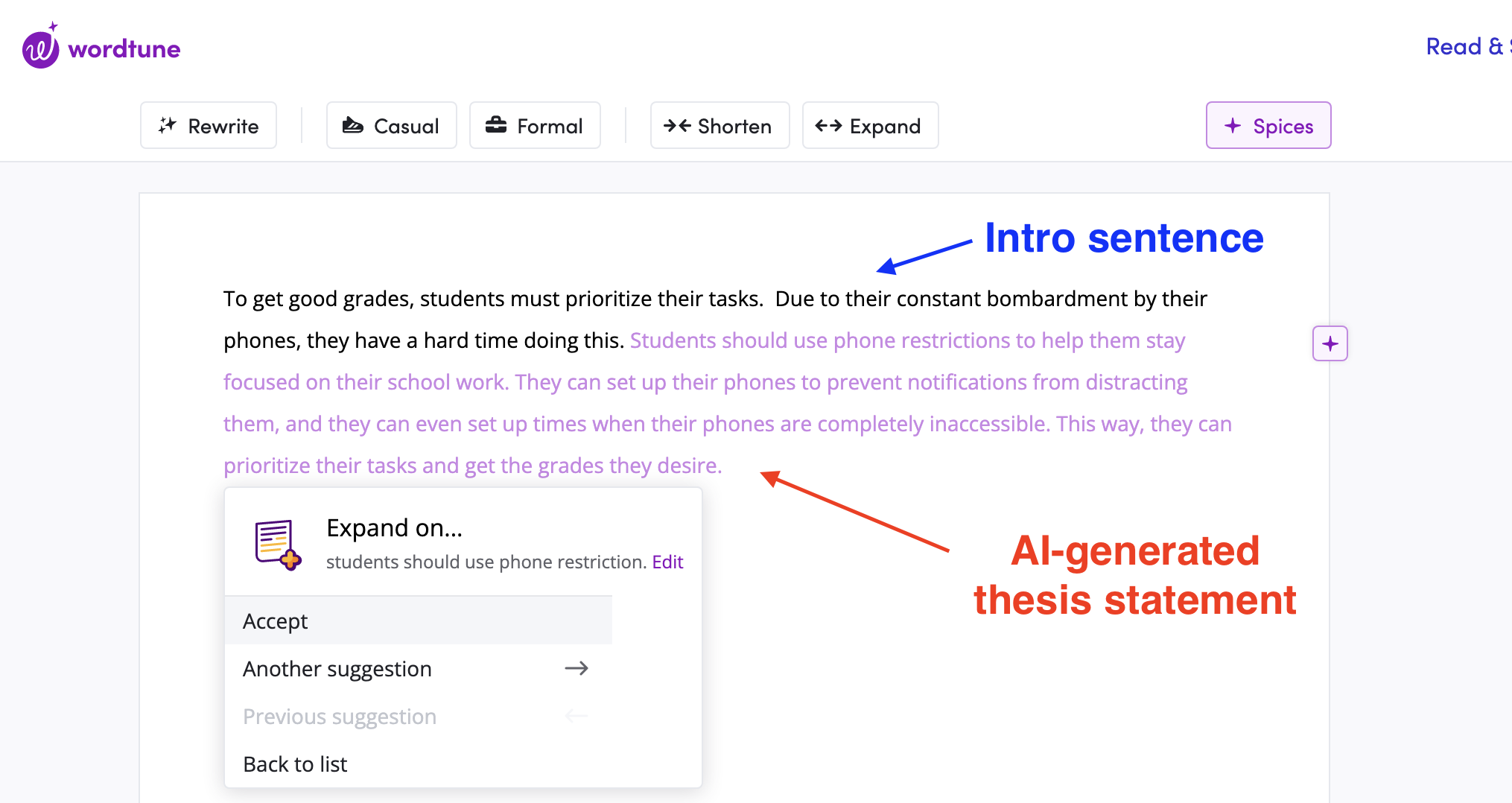

To make the process even easier, you can use Wordtune's generative AI capabilities to craft an effective thesis statement. Take your current thesis statement and try the paraphrase tool to get suggestions for better ways of articulating it. Wordtune will generate a set of related phrasings, which you can select from to refine your statement. You can also use Wordtune's suggestions to draft the thesis statement itself: write your initial introduction sentence, then click '+' and select the explain suggestion. Browse through the suggestions until you have a statement that captures your idea.

Thesis Check: Look for These Three Elements

At this point, you should have a thesis that will set up an original, compelling essay, but before you set out to write that essay, make sure your thesis contains these three elements:

- Topic: Your thesis should clearly state the topic of your essay, whether swashbuckling pirates, raised garden beds, or methods of snow removal.

- Position or angle: Your thesis should zoom into the specific aspect of your topic that your essay will focus on, and briefly but boldly state your position or describe your angle.

- Summary of evidence and/or argument: In a concise phrase or two, your thesis should summarize the evidence and/or argument your essay will present, setting up your readers for what’s coming without giving everything away.

The challenge for you is communicating each of these elements in a sentence or two. But remember: Your thesis will come at the end of your intro, which will already have done some work to establish your topic and focus. Those aspects don’t need to be overexplained in your thesis, just clearly mentioned and tied to your position and evidence.

Let’s look at our examples from earlier to see how they accomplish this:

Notice how:

- The topic is mentioned by name.

- The position or angle is clearly stated.

- The evidence or argument is set up, as well as the assumptions or opposing view that the essay will debunk.

Both theses prepare the reader for what’s coming in the rest of the essay:

- An argument to show that raised beds are actually a poor option for gardeners who want to grow thriving, healthy, resilient plants.

- An exposition of modern fencing in comparison with medieval sword fighting that shows how different they are.

Examine your refined thesis. Are all three elements present? If any are missing, make any additions or clarifications needed to correct it.

It’s Essay-Writing Time!

Now that your thesis is ready to go, you have the rest of your essay to think about. With the work you’ve already done to develop your thesis, you should have an idea of what comes next — but if you need help forming your persuasive essay’s argument, we’ve got a blog for that.


Artificial Intelligence & Machine Learning Thesis Statement Examples


Artificial Intelligence (AI) and Machine Learning (ML) are pioneering technologies driving innovation across various sectors. When composing a thesis in this dynamic field, it is essential to commence with a concise and precise thesis statement that encapsulates your research’s essence. Below are examples of good and bad thesis statements, each followed by an analysis illustrating their effectiveness or shortcomings.

Good Thesis Statement Examples

Specific and Clear: “This thesis will investigate the application of machine learning algorithms in predicting stock prices with a focus on the technology sector.”

Unclear: “Machine learning can be used to predict stock prices.”

The good example is clear and specific, detailing the application area (stock price prediction) and narrowing the focus to the technology sector. In contrast, the bad statement is vague, lacking both specificity and a defined scope.

Arguable and Debatable: “Despite its benefits, the implementation of AI in hiring processes can inadvertently reinforce existing biases, thus exacerbating workplace inequality.”

Dull: “AI in hiring has pros and cons.”

The good statement is debatable and presents a clear argument, highlighting the potential downside of AI in hiring. Meanwhile, the bad statement is indecisive and fails to present a clear argument or stance.

Researchable and Measurable: “This study explores the efficacy of deep learning in the early detection of breast cancer through the analysis of mammographic images.”

Uninspiring: “AI can help detect diseases early.”

The good example is researchable and measurable, specifying the AI type (deep learning), application (early detection of breast cancer), and method (analysis of mammographic images). Conversely, the bad statement is too general and lacks specificity.

Bad Thesis Statement Examples

Overly Broad: “Artificial intelligence is changing the world.”

While true, this statement is overly broad, providing no clear direction or focus for research.

Lack of Clear Argument: “AI and ML are important in data analysis.”

This statement, while factual, lacks a clear argument or focus, not providing the reader with an understanding of the research’s purpose or direction.

Unoriginal and Unengaging: “AI is used in many areas like healthcare, finance, and technology.”

Though factual, this statement is unoriginal and unengaging, lacking a specific focus or claim to guide the research.

Crafting an effective thesis statement for AI and ML research necessitates clarity, specificity, and a well-defined argument. Good thesis statements serve as a robust foundation, guiding both the researcher and the reader through the research journey. Conversely, bad thesis statements are vague, broad, and lack a clear focus, which might misguide the research process. By considering the examples provided, students can adeptly craft thesis statements that not only encapsulate their research focus but also engage readers with compelling arguments in the ever-evolving fields of Artificial Intelligence and Machine Learning.


Conclusions


The field of artificial intelligence has made remarkable progress in the past five years and is having real-world impact on people, institutions and culture. The ability of computer programs to perform sophisticated language- and image-processing tasks, core problems that have driven the field since its birth in the 1950s, has advanced significantly. Although the current state of AI technology is still far short of the field’s founding aspiration of recreating full human-like intelligence in machines, research and development teams are leveraging these advances and incorporating them into society-facing applications. For example, the use of AI techniques in healthcare is becoming a reality, and the brain sciences are both a beneficiary of and a contributor to AI advances. Old and new companies are investing money and attention to varying degrees to find ways to build on this progress and provide services that scale in unprecedented ways.

The field’s successes have led to an inflection point: It is now urgent to think seriously about the downsides and risks that the broad application of AI is revealing. The increasing capacity to automate decisions at scale is a double-edged sword; intentional deepfakes or simply unaccountable algorithms making mission-critical recommendations can result in people being misled, discriminated against, and even physically harmed. Algorithms trained on historical data are disposed to reinforce and even exacerbate existing biases and inequalities. Whereas AI research has traditionally been the purview of computer scientists and researchers studying cognitive processes, it has become clear that all areas of human inquiry, especially the social sciences, need to be included in a broader conversation about the future of the field. Minimizing the negative impacts on society and enhancing the positive requires more than one-shot technological solutions; keeping AI on track for positive outcomes relevant to society requires ongoing engagement and continual attention.

Looking ahead, a number of important steps need to be taken. Governments play a critical role in shaping the development and application of AI, and they have been rapidly adjusting to acknowledge the importance of the technology to science, economics, and the process of governing itself. But government institutions are still behind the curve, and sustained investment of time and resources will be needed to meet the challenges posed by rapidly evolving technology. In addition to regulating the most influential aspects of AI applications on society, governments need to look ahead to ensure the creation of informed communities. Incorporating understanding of AI concepts and implications into K-12 education is an example of a needed step to help prepare the next generation to live in and contribute to an equitable AI-infused world.

The AI research community itself has a critical role to play in this regard, learning how to share important trends and findings with the public in informative and actionable ways, free of hype and clear about the dangers and unintended consequences along with the opportunities and benefits. AI researchers should also recognize that complete autonomy is not the eventual goal for AI systems. Our strength as a species comes from our ability to work together and accomplish more than any of us could alone. AI needs to be incorporated into that community-wide system, with clear lines of communication between human and automated decision-makers. At the end of the day, the success of the field will be measured by how it has empowered all people, not by how efficiently machines devalue the very people we are trying to help.

Cite This Report

Michael L. Littman, Ifeoma Ajunwa, Guy Berger, Craig Boutilier, Morgan Currie, Finale Doshi-Velez, Gillian Hadfield, Michael C. Horowitz, Charles Isbell, Hiroaki Kitano, Karen Levy, Terah Lyons, Melanie Mitchell, Julie Shah, Steven Sloman, Shannon Vallor, and Toby Walsh. "Gathering Strength, Gathering Storms: The One Hundred Year Study on Artificial Intelligence (AI100) 2021 Study Panel Report." Stanford University, Stanford, CA, September 2021. Doc: http://ai100.stanford.edu/2021-report. Accessed: September 16, 2021.

Report Authors

AI100 Standing Committee and Study Panel

© 2021 by Stanford University. Gathering Strength, Gathering Storms: The One Hundred Year Study on Artificial Intelligence (AI100) 2021 Study Panel Report is made available under a Creative Commons Attribution-NoDerivatives 4.0 License (International): https://creativecommons.org/licenses/by-nd/4.0/.

The Future of AI Research: 20 Thesis Ideas for Undergraduate Students in Machine Learning and Deep Learning for 2023!

A comprehensive guide for crafting an original and innovative thesis in the field of AI.

By Aarafat Islam on 2023-01-11

“The beauty of machine learning is that it can be applied to any problem you want to solve, as long as you can provide the computer with enough examples.” — Andrew Ng

This article provides a list of 20 potential thesis ideas for an undergraduate program in machine learning and deep learning in 2023. Each thesis idea includes an introduction, which presents a brief overview of the topic and the research objectives. The ideas relate to different areas of machine learning and deep learning, such as computer vision, natural language processing, robotics, finance, drug discovery, and more. The article also includes explanations, examples, and conclusions for each thesis idea, which can help guide the research and provide a clear understanding of the potential contributions and outcomes of the proposed research. It also emphasizes the importance of originality and the need for proper citation in order to avoid plagiarism.

1. Investigating the use of Generative Adversarial Networks (GANs) in medical imaging: A deep learning approach to improve the accuracy of medical diagnoses.

Introduction: Medical imaging is an important tool in the diagnosis and treatment of various medical conditions. However, accurately interpreting medical images can be challenging, especially for less experienced doctors. This thesis aims to explore the use of GANs in medical imaging, in order to improve the accuracy of medical diagnoses.

2. Exploring the use of deep learning in natural language generation (NLG): An analysis of the current state-of-the-art and future potential.

Introduction: Natural language generation is an important field in natural language processing (NLP) that deals with creating human-like text automatically. Deep learning has shown promising results in NLP tasks such as machine translation, sentiment analysis, and question-answering. This thesis aims to explore the use of deep learning in NLG and analyze the current state-of-the-art models, as well as potential future developments.

3. Development and evaluation of deep reinforcement learning (RL) for robotic navigation and control.

Introduction: Robotic navigation and control are challenging tasks that require a high degree of intelligence and adaptability. Deep RL has shown promising results in various robotics tasks, such as robotic arm control, autonomous navigation, and manipulation. This thesis aims to develop a deep RL-based approach for robotic navigation and control and evaluate its performance in various environments and tasks.

4. Investigating the use of deep learning for drug discovery and development.

Introduction: Drug discovery and development is a time-consuming and expensive process, which often involves high failure rates. Deep learning has been used to improve various tasks in bioinformatics and biotechnology, such as protein structure prediction and gene expression analysis. This thesis aims to investigate the use of deep learning for drug discovery and development and examine its potential to improve the efficiency and accuracy of the drug development process.

5. Comparison of deep learning and traditional machine learning methods for anomaly detection in time series data.

Introduction: Anomaly detection in time series data is a challenging task, which is important in various fields such as finance, healthcare, and manufacturing. Deep learning methods have been used to improve anomaly detection in time series data, while traditional machine learning methods have been widely used as well. This thesis aims to compare deep learning and traditional machine learning methods for anomaly detection in time series data and examine their respective strengths and weaknesses.
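To make the "traditional" side of that comparison concrete, here is a minimal sketch of a classical statistical baseline: flag any point whose z-score exceeds a threshold. The function name `zscore_anomalies`, the sample series, and the threshold are assumptions for illustration. Real projects would likely use rolling windows or a dedicated library.

```python
from statistics import mean, stdev


def zscore_anomalies(series, threshold=3.0):
    """Return indices of points more than `threshold` sample standard
    deviations away from the mean of the whole series."""
    mu = mean(series)
    sigma = stdev(series)
    if sigma == 0:
        # A constant series has no outliers under this rule.
        return []
    return [i for i, x in enumerate(series) if abs(x - mu) / sigma > threshold]


# Hypothetical sensor values with one obvious spike at index 7.
series = [10, 11, 10, 9, 10, 11, 10, 50, 10, 9]
print(zscore_anomalies(series, threshold=2.5))
```

Note that a large outlier inflates the standard deviation itself, which is why a lower threshold than the default is used on this short series. This weakness is one of the trade-offs a thesis could measure against a learned detector.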


6. Use of deep transfer learning in speech recognition and synthesis.

Introduction: Speech recognition and synthesis are areas of natural language processing that focus on converting spoken language to text and vice versa. Transfer learning has been widely used in deep learning-based speech recognition and synthesis systems to improve their performance by reusing the features learned from other tasks. This thesis aims to investigate the use of transfer learning in speech recognition and synthesis and how it improves the performance of the system in comparison to traditional methods.

7. The use of deep learning for financial prediction.

Introduction: Financial prediction is a challenging task that requires a high degree of intelligence and adaptability, especially in the field of stock market prediction. Deep learning has shown promising results in various financial prediction tasks, such as stock price prediction and credit risk analysis. This thesis aims to investigate the use of deep learning for financial prediction and examine its potential to improve the accuracy of financial forecasting.

8. Investigating the use of deep learning for computer vision in agriculture.

Introduction: Computer vision has the potential to revolutionize the field of agriculture by improving crop monitoring, precision farming, and yield prediction. Deep learning has been used to improve various computer vision tasks, such as object detection, semantic segmentation, and image classification. This thesis aims to investigate the use of deep learning for computer vision in agriculture and examine its potential to improve the efficiency and accuracy of crop monitoring and precision farming.

9. Development and evaluation of deep learning models for generative design in engineering and architecture.

Introduction: Generative design is a powerful tool in engineering and architecture that can help optimize designs and reduce human error. Deep learning has been used to improve various generative design tasks, such as design optimization and form generation. This thesis aims to develop and evaluate deep learning models for generative design in engineering and architecture and examine their potential to improve the efficiency and accuracy of the design process.

10. Investigating the use of deep learning for natural language understanding.

Introduction: Natural language understanding is a complex task of natural language processing that involves extracting meaning from text. Deep learning has been used to improve various NLP tasks, such as machine translation, sentiment analysis, and question-answering. This thesis aims to investigate the use of deep learning for natural language understanding and examine its potential to improve the efficiency and accuracy of natural language understanding systems.


11. Comparing deep learning and traditional machine learning methods for image compression.

Introduction: Image compression is an important task in image processing and computer vision. It enables faster data transmission and storage of image files. Deep learning methods have been used to improve image compression, while traditional machine learning methods have been widely used as well. This thesis aims to compare deep learning and traditional machine learning methods for image compression and examine their respective strengths and weaknesses.

12. Using deep learning for sentiment analysis in social media.

Introduction: Sentiment analysis in social media is an important task that can help businesses and organizations understand their customers’ opinions and feedback. Deep learning has been used to improve sentiment analysis in social media, by training models on large datasets of social media text. This thesis aims to use deep learning for sentiment analysis in social media, and evaluate its performance against traditional machine learning methods.
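Before training any model, it can help to have a non-learned reference point to compare against. The sketch below is a simple lexicon-count baseline, not a machine learning method. The word lists and the function name `lexicon_sentiment` are made up for this example.

```python
# Hypothetical toy lexicons; a real baseline would use a published lexicon.
POSITIVE = {"love", "great", "good", "happy"}
NEGATIVE = {"hate", "bad", "awful", "slow"}


def lexicon_sentiment(text):
    """Label text by counting positive and negative lexicon words."""
    words = [w.strip(".,!?").lower() for w in text.split()]
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


print(lexicon_sentiment("I love this phone, great battery!"))
print(lexicon_sentiment("Awful service and slow delivery."))
```

A baseline like this misses negation and sarcasm, which is exactly the kind of gap an evaluation against deep learning models can quantify.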

13. Investigating the use of deep learning for image generation.

Introduction: Image generation is a task in computer vision that involves creating new images from scratch or modifying existing images. Deep learning has been used to improve various image generation tasks, such as super-resolution, style transfer, and face generation. This thesis aims to investigate the use of deep learning for image generation and examine its potential to improve the quality and diversity of generated images.

14. Development and evaluation of deep learning models for anomaly detection in cybersecurity.

Introduction: Anomaly detection in cybersecurity is an important task that can help detect and prevent cyber-attacks. Deep learning has been used to improve various anomaly detection tasks, such as intrusion detection and malware detection. This thesis aims to develop and evaluate deep learning models for anomaly detection in cybersecurity and examine their potential to improve the efficiency and accuracy of cybersecurity systems.

15. Investigating the use of deep learning for natural language summarization.

Introduction: Natural language summarization is an important task in natural language processing that involves creating a condensed version of a text that preserves its main meaning. Deep learning has been used to improve various natural language summarization tasks, such as document summarization and headline generation. This thesis aims to investigate the use of deep learning for natural language summarization and examine its potential to improve the efficiency and accuracy of natural language summarization systems.


16. Development and evaluation of deep learning models for facial expression recognition.

Introduction: Facial expression recognition is an important task in computer vision and has many practical applications, such as human-computer interaction, emotion recognition, and psychological studies. Deep learning has been used to improve facial expression recognition, by training models on large datasets of images. This thesis aims to develop and evaluate deep learning models for facial expression recognition and examine their performance against traditional machine learning methods.

17. Investigating the use of deep learning for generative models in music and audio.

Introduction: Music and audio synthesis is an important task in audio processing, which has many practical applications, such as music generation and speech synthesis. Deep learning has been used to improve generative models for music and audio, by training models on large datasets of audio data. This thesis aims to investigate the use of deep learning for generative models in music and audio and examine its potential to improve the quality and diversity of generated audio.

18. Comparing deep learning models with traditional algorithms for anomaly detection in network traffic.

Introduction: Anomaly detection in network traffic is an important task that can help detect and prevent cyber-attacks. Deep learning models have been used for this task, and traditional methods such as clustering and rule-based systems are widely used as well. This thesis aims to compare deep learning models with traditional algorithms for anomaly detection in network traffic and analyze the trade-offs between the models in terms of accuracy and scalability.

19. Investigating the use of deep learning for improving recommender systems.

Introduction: Recommender systems are widely used in many applications such as online shopping, music streaming, and movie streaming. Deep learning has been used to improve the performance of recommender systems, by training models on large datasets of user-item interactions. This thesis aims to investigate the use of deep learning for improving recommender systems and compare its performance with traditional content-based and collaborative filtering approaches.
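For readers unfamiliar with the collaborative filtering baseline mentioned above, the following is a minimal sketch of the user-based variant: unseen items are scored by the ratings of other users, weighted by cosine similarity to the target user. The user names, ratings, and function names are hypothetical. Production systems use far larger, sparser data and more careful normalization.

```python
from math import sqrt


def cosine(u, v):
    """Cosine similarity between two sparse rating dicts (item -> rating)."""
    shared = set(u) & set(v)
    dot = sum(u[i] * v[i] for i in shared)
    norm_u = sqrt(sum(r * r for r in u.values()))
    norm_v = sqrt(sum(r * r for r in v.values()))
    if norm_u == 0 or norm_v == 0:
        return 0.0
    return dot / (norm_u * norm_v)


def recommend(target, ratings, top_n=1):
    """Rank items the target has not rated by similarity-weighted ratings."""
    scores = {}
    for user, items in ratings.items():
        if user == target:
            continue
        sim = cosine(ratings[target], items)
        for item, rating in items.items():
            if item not in ratings[target]:
                scores[item] = scores.get(item, 0.0) + sim * rating
    return sorted(scores, key=scores.get, reverse=True)[:top_n]


# Hypothetical user-item ratings on a 1-5 scale.
ratings = {
    "ana": {"A": 5, "B": 4},
    "ben": {"A": 5, "B": 4, "C": 5},
    "cy": {"A": 1, "D": 5},
}
print(recommend("ana", ratings))
```

Here "ben" rates items much like "ana", so his highly rated item "C" outranks "D", which comes from the less similar user "cy".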

20. Development and evaluation of deep learning models for multi-modal data analysis.

Introduction: Multi-modal data analysis is the task of analyzing and understanding data from multiple sources such as text, images, and audio. Deep learning has been used to improve multi-modal data analysis by training models on large multi-modal datasets. This thesis aims to develop and evaluate deep learning models for multi-modal data analysis and analyze their potential to improve performance in comparison to single-modal models.

I hope that this article has provided you with a useful guide for your thesis research in machine learning and deep learning. Remember to conduct a thorough literature review and to include proper citations in your work, as well as to be original in your research to avoid plagiarism. I wish you all the best of luck with your thesis and your research endeavors!



Artificial Intelligence


FIU dissertations

Non-FIU dissertations

Many universities provide full-text access to their dissertations via a digital repository. If you know the title of a particular dissertation or thesis, try doing a Google search.

Aims to be the best possible resource for finding open access graduate theses and dissertations published around the world with metadata from over 800 colleges, universities, and research institutions. Currently, indexes over 1 million theses and dissertations.

This is a discovery service for open access research theses awarded by European universities.

A union catalog of Canadian theses and dissertations, in both electronic and analog formats, is available through the search interface on this portal.

There are currently more than 90 countries and over 1200 institutions represented. CRL has catalog records for over 800,000 foreign doctoral dissertations.

An international collaborative resource, the NDLTD Union Catalog contains more than one million records of electronic theses and dissertations. Use BASE, the VTLS Visualizer or any of the geographically specific search engines noted lower on their webpage.

Indexes doctoral dissertations and master's theses in all areas of academic research, with international coverage.

ProQuest Dissertations & Theses Global


- Last Updated: Apr 4, 2024 8:33 AM

- URL: https://library.fiu.edu/artificial-intelligence


Completed Theses

State space search solves navigation tasks and many other real-world problems. Heuristic search, especially greedy best-first search, is one of the most successful approaches to state space search. We improve the state of the art in heuristic search in three directions.

In Part I, we present methods to train neural networks as powerful heuristics for a given state space. We present a universal approach to generate training data using random walks from a (partial) state. We demonstrate that our heuristics trained for a specific task are often better than heuristics trained for a whole domain. We show that the performance of all trained heuristics is highly complementary: there is no clear pattern as to which trained heuristic to prefer for a specific task. In general, model-based planners still outperform planners with trained heuristics, but our approaches exceed the model-based algorithms in the Storage domain. To our knowledge, a learning-based planner has exceeded the state-of-the-art model-based planners only once before, in the Spanner domain.
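The abstract does not spell out how the random walks are turned into training data. One plausible reading, shown here only as a minimal sketch, is to walk backwards from a goal state and label each visited state with the number of steps taken, which is an upper bound on its goal distance under unit costs. The function name `sample_backward_walks`, the `predecessors` callback, and the toy line-shaped state space are all assumptions made for illustration, not the thesis's method.

```python
import random

def sample_backward_walks(goal, predecessors, num_walks, max_steps, seed=0):
    """Collect (state, steps) pairs from random walks that start at the goal.

    `steps` is the number of backward moves taken so far, so it is an upper
    bound on the unit-cost goal distance of `state` (hypothetical labelling).
    """
    rng = random.Random(seed)
    samples = []
    for _ in range(num_walks):
        state, steps = goal, 0
        for _ in range(max_steps):
            preds = predecessors(state)
            if not preds:
                break
            state = rng.choice(preds)
            steps += 1
            samples.append((state, steps))
    return samples

# Toy state space (assumption): positions 0..5 on a line, goal at position 0.
def line_predecessors(state):
    return [s for s in (state - 1, state + 1) if 0 <= s <= 5]

samples = sample_backward_walks(0, line_predecessors, num_walks=20, max_steps=8)
```

In this toy space the true goal distance of position `s` is `s`, so every label can be checked against it. A learned heuristic would then be trained as a regressor on such pairs.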

A priori, it is unknown whether a heuristic, or in the more general case a planner, performs well on a task. Hence, we trained online portfolios to select the best planner for a task. Today, all online portfolios are based on handcrafted features. In Part II, we present new online portfolios based on neural networks, which receive the complete task as input, and not just a few handcrafted features. Additionally, our portfolios can reconsider their choices. Both extensions greatly improve the state of the art in online portfolios. Finally, we show that explainable machine learning techniques, as an alternative to neural networks, also make good online portfolios. Additionally, we present methods to improve our trust in their predictions.

Even if we select the best search algorithm, we cannot solve some tasks in reasonable time. We can speed up the search if we know how it will behave in the future. In Part III, we inspect the behavior of greedy best-first search with a fixed heuristic on simple tasks of a domain to learn its behavior for any task of the same domain. Once greedy best-first search has expanded a progress state, it expands only states with lower heuristic values. We learn to identify progress states and present two methods to exploit this knowledge. Building upon this, we extract the bench transition system of a task and generalize it in such a way that we can apply it to any task of the same domain. We can use this generalized bench transition system to split a task into a sequence of simpler searches.

In all three research directions, we contribute new approaches and insights to the state of the art, and we indicate interesting topics for future work.

Greedy best-first search (GBFS) is a sibling of A* in the family of best-first state-space search algorithms. While A* is guaranteed to find optimal solutions of search problems, GBFS does not provide any guarantees but typically finds satisficing solutions more quickly than A*. A classical result of optimal best-first search shows that A* with an admissible and consistent heuristic expands every state whose f-value is below the optimal solution cost and no state whose f-value is above the optimal solution cost. Theoretical results of this kind are useful for the analysis of heuristics in different search domains and for the improvement of algorithms. For satisficing algorithms a similarly clear understanding is currently lacking. We examine the search behavior of GBFS in order to make progress towards such an understanding.
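To make the contrast with A* concrete, here is a minimal sketch of GBFS, which always expands the open state with the lowest heuristic value and ignores path cost. The graph `GRAPH`, the heuristic table `H`, and the FIFO tie-breaking rule are illustrative assumptions, not taken from the thesis.

```python
import heapq
from itertools import count

def greedy_best_first_search(start, is_goal, successors, h):
    """Return a path from start to a goal state, or None if none exists."""
    tie = count()  # FIFO tie-breaking among states with equal h (assumption)
    open_list = [(h(start), next(tie), start)]
    parent = {start: None}
    while open_list:
        _, _, state = heapq.heappop(open_list)
        if is_goal(state):
            path = []
            while state is not None:
                path.append(state)
                state = parent[state]
            return path[::-1]
        for succ in successors(state):
            if succ not in parent:  # each state is generated at most once
                parent[succ] = state
                heapq.heappush(open_list, (h(succ), next(tie), succ))
    return None

# Hypothetical unit-cost state space: A-B-G is the shortest solution,
# but the heuristic makes C look more promising than B.
GRAPH = {"A": ["B", "C"], "B": ["G"], "C": ["D"], "D": ["G"], "G": []}
H = {"A": 3, "B": 2, "C": 1, "D": 1, "G": 0}

path = greedy_best_first_search(
    "A", lambda s: s == "G", lambda s: GRAPH[s], lambda s: H[s]
)
```

On this example the search returns the three-step path through C and D although a two-step path through B exists, which illustrates why GBFS is satisficing rather than optimal.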

We introduce the concept of high-water mark benches, which separate the search space into areas that are searched by GBFS in sequence. High-water mark benches allow us to exactly determine the set of states that GBFS expands under at least one tie-breaking strategy. We show that benches contain craters. Once GBFS enters a crater, it has to expand every state in the crater before being able to escape.
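The abstract does not define the high-water mark itself. The sketch below assumes the definition commonly used in the GBFS literature: the high-water mark of a state is the minimum, over all paths from that state to a goal, of the largest heuristic value on the path. Under that assumption it can be computed for a small explicit state space with a Dijkstra-style backward sweep. The graph and heuristic values are hypothetical.

```python
import heapq

def high_water_marks(graph, h, goals):
    """Map each state that can reach a goal to its high-water mark.

    Assumed definition: hwm(s) = min over paths from s to a goal of the
    maximum h-value of any state on the path (including s itself).
    """
    preds = {s: [] for s in graph}
    for s, succs in graph.items():
        for t in succs:
            preds[t].append(s)
    hwm = {}
    queue = [(h[g], g) for g in goals]
    heapq.heapify(queue)
    while queue:
        value, state = heapq.heappop(queue)
        if state in hwm:
            continue  # already settled with a value that is at least as low
        hwm[state] = value
        for p in preds[state]:
            if p not in hwm:
                heapq.heappush(queue, (max(value, h[p]), p))
    return hwm

# Hypothetical state space; every state appears as a key.
graph = {"A": ["B", "C"], "B": ["G"], "C": ["D"], "D": ["G"], "G": []}
h = {"A": 3, "B": 2, "C": 1, "D": 4, "G": 0}
marks = high_water_marks(graph, h, goals=["G"])
```

Here C has a low heuristic value of 1 but a high-water mark of 4, because every path from C to the goal passes through D.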

Benches and craters allow us to characterize the best-case and worst-case behavior of GBFS in given search instances. We show that computing the best-case or worst-case behavior of GBFS is NP-complete in general but can be computed in polynomial time for undirected state spaces.

We present algorithms for extracting the set of states that GBFS potentially expands and for computing the best-case and worst-case behavior. We use the algorithms to analyze GBFS on benchmark tasks from planning competitions under a state-of-the-art heuristic. Experimental results reveal interesting characteristics of the heuristic on the given tasks and demonstrate the importance of tie-breaking in GBFS.

Classical planning tackles the problem of finding a sequence of actions that leads from an initial state to a goal. Over the last decades, planning systems have become significantly better at answering the question of whether such a sequence exists by applying a variety of techniques which have become more and more complex. As a result, it has become nearly impossible to formally analyze whether a planning system is actually correct in its answers, and we need to rely on experimental evidence.

One way to increase trust is the concept of certifying algorithms, which provide a witness that justifies their answer and can be verified independently. When a planning system finds a solution to a problem, the solution itself is a witness, and we can verify it by simply applying it. But what if the planning system claims the task is unsolvable? So far, there has been no principled way of verifying this claim.
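Verifying a plan by applying it can be sketched in a few lines. The example assumes a STRIPS-style encoding in which a state is a set of facts and each action has a precondition, add list, and delete list. The `Action` type and the ball-and-box task are hypothetical.

```python
from typing import NamedTuple

class Action(NamedTuple):
    name: str
    pre: frozenset     # facts that must hold before the action
    add: frozenset     # facts made true by the action
    delete: frozenset  # facts made false by the action

def validate_plan(init, goal, plan):
    """Apply the plan step by step; accept only if it is applicable and reaches the goal."""
    state = frozenset(init)
    for action in plan:
        if not action.pre <= state:
            return False  # precondition violated, so the plan is not applicable
        state = (state - action.delete) | action.add
    return frozenset(goal) <= state

# Hypothetical task: move a ball from the table into a box.
pick = Action("pick", frozenset({"hand_empty", "ball_on_table"}),
              frozenset({"holding_ball"}),
              frozenset({"hand_empty", "ball_on_table"}))
drop = Action("drop-in-box", frozenset({"holding_ball"}),
              frozenset({"ball_in_box", "hand_empty"}),
              frozenset({"holding_ball"}))

init = {"hand_empty", "ball_on_table"}
goal = {"ball_in_box"}
plan_ok = validate_plan(init, goal, [pick, drop])
plan_wrong_order = validate_plan(init, goal, [drop, pick])
```

The check runs in time linear in the plan length and needs no knowledge of how the planner found the plan, which is what makes the plan a convenient witness.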

This thesis contributes two approaches to create witnesses for unsolvable planning tasks. Inductive certificates are based on the idea of invariants. They argue that the initial state is part of a set of states that we cannot leave and that contains no goal state. In our second approach, we define a proof system that proves in an incremental fashion that certain states cannot be part of a solution until it has proven that either the initial state or all goal states are such states.
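The three conditions of an inductive certificate can be checked directly when the state set is small enough to enumerate. The sketch below does this for an explicitly listed set. A practical certificate would presumably use a compact representation rather than enumeration, so the explicit set, the `Action` type, and the locked-door task are assumptions for illustration only.

```python
from typing import NamedTuple

class Action(NamedTuple):
    name: str
    pre: frozenset
    add: frozenset
    delete: frozenset

def is_inductive_certificate(states, init, goal, actions):
    """Check that `states` contains init, contains no goal state, and cannot be left."""
    states = {frozenset(s) for s in states}
    if frozenset(init) not in states:
        return False
    for state in states:
        if frozenset(goal) <= state:
            return False  # the set contains a goal state
        for action in actions:
            if action.pre <= state:
                successor = (state - action.delete) | action.add
                if successor not in states:
                    return False  # an action leads out of the set
    return True

# Hypothetical unsolvable task: the door needs a key that no action provides.
actions = [
    Action("move-a-b", frozenset({"at_a"}), frozenset({"at_b"}), frozenset({"at_a"})),
    Action("move-b-a", frozenset({"at_b"}), frozenset({"at_a"}), frozenset({"at_b"})),
    Action("open-door", frozenset({"at_b", "has_key"}), frozenset({"door_open"}), frozenset()),
]
init = {"at_a"}
goal = {"door_open"}

valid = is_inductive_certificate([{"at_a"}, {"at_b"}], init, goal, actions)
too_small = is_inductive_certificate([{"at_a"}], init, goal, actions)
```

The second set is rejected because `move-a-b` leads outside it, so it does not prove that the goal is unreachable.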

Both approaches are complete in the sense that a witness exists for every unsolvable planning task, and can be verified efficiently (with respect to the size of the witness) by an independent verifier if certain criteria are met. To show their applicability to state-of-the-art planning techniques, we provide an extensive overview of how these approaches can cover several search algorithms, heuristics and other techniques. Finally, we show with an experimental study that generating and verifying these explanations is not only theoretically possible but also practically feasible, thus making a first step towards fully certifying planning systems.

Heuristic search with an admissible heuristic is one of the most prominent approaches to solving classical planning tasks optimally. In the first part of this thesis, we introduce a new family of admissible heuristics for classical planning, based on Cartesian abstractions, which we derive by counterexample-guided abstraction refinement. Since one abstraction usually is not informative enough for challenging planning tasks, we present several ways of creating diverse abstractions. To combine them admissibly, we introduce a new cost partitioning algorithm, which we call saturated cost partitioning. It considers the heuristics sequentially and uses the minimum amount of costs that preserves all heuristic estimates for the current heuristic before passing the remaining costs to subsequent heuristics until all heuristics have been served this way.
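The sequential procedure described above can be sketched for small, explicitly given abstractions. This is a simplified illustration, not the thesis's implementation: it assumes non-negative operator costs, represents each abstraction as a hypothetical triple `(states, transitions, goals)` with transitions of the form `(source, operator, target)`, and skips the construction of Cartesian abstractions entirely.

```python
import math

def goal_distances(abstraction, costs):
    """Cheapest cost from each abstract state to an abstract goal state."""
    states, transitions, goals = abstraction
    dist = {s: (0 if s in goals else math.inf) for s in states}
    # Bellman-Ford style relaxation; adequate for tiny examples.
    for _ in range(len(states)):
        for src, op, dst in transitions:
            dist[src] = min(dist[src], costs[op] + dist[dst])
    return dist

def saturated_costs(abstraction, dist, operators):
    """Smallest operator costs that preserve all finite goal distances."""
    _, transitions, _ = abstraction
    saturated = {op: 0 for op in operators}
    for src, op, dst in transitions:
        if math.isfinite(dist[src]) and math.isfinite(dist[dst]):
            saturated[op] = max(saturated[op], dist[src] - dist[dst])
    return saturated

def saturated_cost_partitioning(abstractions, costs):
    """Serve the abstractions in order, passing unused costs on to later ones."""
    remaining = dict(costs)
    tables = []
    for abstraction in abstractions:
        dist = goal_distances(abstraction, remaining)
        tables.append(dist)
        for op, used in saturated_costs(abstraction, dist, costs).items():
            remaining[op] -= used
    return tables

# Hypothetical example with three operators and two abstractions.
costs = {"o1": 4, "o2": 1, "o3": 1}
abs1 = (["x0", "x1", "x2"],
        [("x0", "o1", "x1"), ("x1", "o2", "x2"), ("x0", "o3", "x1")],
        {"x2"})
abs2 = (["y0", "y1"], [("y0", "o1", "y1")], {"y1"})

tables = saturated_cost_partitioning([abs1, abs2], costs)
estimate = tables[0]["x0"] + tables[1]["y0"]
```

In this example the first abstraction needs only 1 of the 4 cost units of `o1`, so the second abstraction still receives 3. The summed estimate for the state that maps to `x0` and `y0` is 5, whereas taking the maximum of the two abstractions under full costs would give 4.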

In the second part, we show that saturated cost partitioning is strongly influenced by the order in which it considers the heuristics. To find good orders, we present a greedy algorithm for creating an initial order and a hill-climbing search for optimizing a given order. Both algorithms make the resulting heuristics significantly more accurate. However, we obtain the strongest heuristics by maximizing over saturated cost partitioning heuristics computed for multiple orders, especially if we actively search for diverse orders.

The third part provides a theoretical and experimental comparison of saturated cost partitioning and other cost partitioning algorithms. Theoretically, we show that saturated cost partitioning dominates greedy zero-one cost partitioning. The difference between the two algorithms is that saturated cost partitioning opportunistically reuses unconsumed costs for subsequent heuristics. By applying this idea to uniform cost partitioning we obtain an opportunistic variant that dominates the original. We also prove that the maximum over suitable greedy zero-one cost partitioning heuristics dominates the canonical heuristic and show several non-dominance results for cost partitioning algorithms. The experimental analysis shows that saturated cost partitioning is the cost partitioning algorithm of choice in all evaluated settings and it even outperforms the previous state of the art in optimal classical planning.

Classical planning is the problem of finding a sequence of deterministic actions in a state space that leads from an initial state to a state satisfying some goal condition. The dominant approach to optimally solve planning tasks is heuristic search, in particular A* search combined with an admissible heuristic. While there exist many different admissible heuristics, we focus on abstraction heuristics in this thesis, and in particular, on the well-established merge-and-shrink heuristics.